Image credit: Unsplash

Abstract

Preferences are ubiquitous in everyday life: we use our own subjective preferences whenever we make a decision to choose our most preferred alternative. Hence, the study of preferences in computer science and AI has been very active for a number of years, yielding important theoretical and practical results as well as libraries and datasets. In many scenarios, including multiagent systems and recommender systems, user preferences play a key role in driving the decisions the system makes. Thus, it is important to have preference modeling frameworks that allow for expressive and compact representations, effective elicitation techniques, and efficient reasoning and aggregation.

Type

Publication

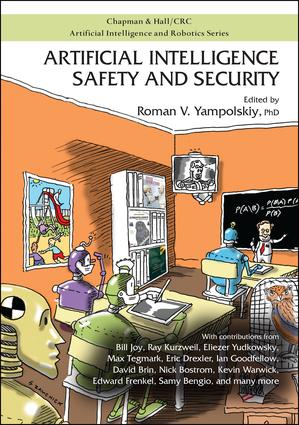

In Artificial Intelligence Safety and Security
